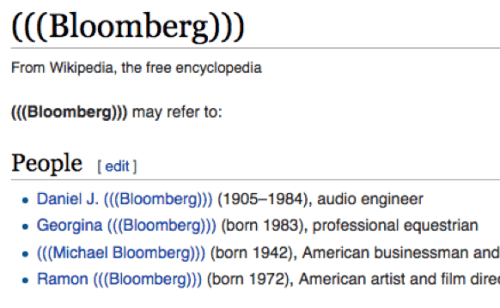

Any corner of the Internet that facilitates anonymity is going to attract trolls. Twitter is no different. Recently, you might have noticed users placing their names in multiple (((parentheses))). It all traces back to anti-Semitic groups. Members place this parenthetical “echo” around the names of Jewish people or businesses when attacking them on social media, giving compatriots an easy way to search for the target and join in on the harassment. There was even a now-removed Google Chrome plugin that made echoing easy, by cross-referencing text against a database of Jews.

Vox has an explainer if you want to read more about how the echo was used, as well as how it and the Chrome plugin were discovered by the rest of us.

Point is, once the echo was exposed, Twitter users, Jewish or not, began putting the echo around their names and other content. More than a symbolic stand, the move also undermined the beacon system being used by the hate groups.

The echo was defeated by the rest of the social media community. But that also involved Google taking down the plugin, which violated its terms forbidding “promotions of hate.” And it involved Twitter banning a number of users who “promote violence against or directly attack or threaten” other users.

Facebook, Twitter, Google, and the like all have different policies on dealing with harassment and hate speech, as well as the ways in which they curate content. They range from Google’s broad ban on hate code to Twitter’s fairly specific ban on direct, violent threats. A few weeks ago, all three agreed to adhere to the European Union’s “code of conduct on illegal online hate speech,” which requires resolution of hate speech reports within 24 hours, be it by removing or restricting the content or the user responsible.

However, speech laws are more restrictive in the E.U. than in the U.S., and vary by country. It’s the service provider’s job to figure out whether a particular post fails to meet legal standards in those various jurisdictions. Much as under the Digital Millennium Copyright Act (DMCA), which YouTube already lets rightsholders wildly abuse, companies face penalties for failing to suppress content, but suffer no consequence for blocking everything in sight just to catch a small number of actual offenders.

It’s easy to see how the social media platforms could err on the side of heavy censorship.

Before the echo received widespread coverage, the hashtag #istandwithhatespeech trended on Twitter, and confused a lot of people, as free speech advocates pushed for more libertarian policies.

Hate Speech, the First Amendment, and Social Media

A common misconception in America is that the government bans “hate speech.” The First Amendment has no such exemption. Speech may be unprotected if it falls under the far narrower fighting words doctrine, but generally we are allowed to say whatever misogynistic, racist, homophobic filth we want (ask a presumptive nominee for president… but we’ll come back to him). People don’t have to agree with us, or even be polite in response, but the police won’t lock us away simply for spouting ignorance, or even hate.

Of course, private companies are not the government, and can restrict whatever speech they like. Facebook cannot violate your First Amendment rights. But that doesn’t mean it can’t model a speech policy after our First Amendment jurisprudence. That’s essentially what BuzzFeed Assistant General Counsel Nabiha Syed and Editor-in-Chief Ben Smith suggested. Their thesis:

[Social media platforms’] core mission can’t and won’t be realized by what they say, but rather in how they empower, constrain, and manage what other people say. The trust we place in them is ultimately about whether we trust them to manage our own collective expression. For this trust to endure, these platforms must be transparent about their own policies and be consistent in their enforcement.

The biggest players on the internet deal in information. Facebook’s Mark Zuckerberg has imagined the largest social network in the world as the “perfect personalized newspaper.” The way in which Google ranks and removes search results is probably the single greatest influence on our collective knowledge. Curation matters, and so, policies that eliminate content from the modern marketplace of ideas also matter.

So, as an abstract principle, it’s a good idea to be as permissive as possible when it comes to speech – let all of the ideas be presented, and reasonable people will amplify the best while dampening the worst.

I think we’re good with that, for the most part. Heated debates between religious and areligious groups on social issues – think same-sex marriage, for instance – could qualify under many definitions of hate speech. But few of us think those groups need silencing. Maybe we appreciate one, and even help to spread its message, while we choose to ignore, or even speak out against, the opposing view. Maybe we just listen to both. The marketplace works!

Or consider Donald Trump. In the span of a few days, the man Republican voters decided should represent them in a bid for the presidency made discriminatory statements about Muslims and about a judge of Mexican descent. Leaders of his own party called those statements “the textbook definition of a racist comment” and “the most un-American thing from a politician since Joe McCarthy.” Hate speech, one could argue. Wiped away from Facebook? No way. Both Trump supporters and foes probably appreciate his statements being spread as far as possible, though certainly for different reasons.

When Hate Speech Becomes Harassment

Which brings us back to the echo, a tactic of the Alt-Right, a white nationalist conservative offshoot that has rallied behind Trump. And to doxing. And to revenge porn. And to all types of cyberbullying.

And to Gamergate. And to Bernie Bros. And even back to Westboro with their protest signs, except spread from one street corner to a million message boards and social media posts.

To the hateful words waiting for women every time they log on. (These will be the most sickening links you click today.)

It’s easy to see how people lose patience, energy, or hope. “Just ban it so I never see it.”

Problem is, attempts at legislating hate speech, even speech-exclusive harassment, often become overbroad suppressants of discourse (here are plenty of people smarter than I explaining how that happens). And as the Economist recently spotlighted, it’s a global problem, warranting a call to action.

I think Twitter’s speech policies are pretty good. They are narrow, and not made up out of thin air. In fact, they read a lot like a digital-age Brandenburg test. But, getting back to Syed and Smith’s point, narrow policies don’t matter if they are inconsistently applied. Inconsistent enforcement aggravates victims and offenders alike.

Conservative firebrand Milo Yiannopoulos was un-verified and – just this past week – suspended, apparently for anti-Islamic tweets and the hashtag “#GaysForTrump,” which was blocked from autocompleting. Another conservative blogger, Chuck C. Johnson, was permanently banned for wanting to “take out” activist DeRay McKesson (clearly with really shoddy tabloid journalism, not physical violence). He’s also been suspended from Facebook a time or two for derogatory comments about Muslims that were no different from those memes your xenophobic relative shares. Those guys are super obnoxious, but they trade in hot takes and rhetorical hyperbole, not true threats.

Justice Louis Brandeis famously wrote that “sunlight is said to be the best of disinfectants.” The marketplace of ideas can’t reject what it is unaware of. The more people saw the Westboro funeral protests, the more marginalized the group became. The bogeyman was exposed, and its myth hardly matched its feeble reality.

Social media companies have an economic incentive to make us comfortable on their platforms. And preventing direct harassment is a noble way to do that. However, we must be careful not to censor with too broad a brush.

Strange as it may seem, we’re actually fortunate to live in a country that protects the sharing of really terrible, disgusting ideas. And stranger still, we should want our major curators of information on the internet to do the same.

Cover illustration by the author, using images from Wikimedia Commons.

